
To dump the generated programs, use the environment variable XLA provides introspection facilities which let you inspect the generated TensorFlow graph into executable code (for x86-64 CPU only). You can also use a standalone tfcompile tool, which converts AOT (Ahead-of-time) compilation for CPU with tfcompile Note: Auto-clustering support on CPU and on multi-GPU environments isĪuto-clustering tutorial colab. $ TF_XLA_FLAGS=-tf_xla_auto_jit=2 path/to/your/tf/programĪuto-clustering is currently optimized for GPU workloads, but it can also beĮnabled on CPU by additionally using the flag -tf_xla_cpu_global_jit: $ TF_XLA_FLAGS="-tf_xla_auto_jit=2 -tf_xla_cpu_global_jit" path/to/your/program Auto-clustering on GPU can be enabled by setting the TF_XLA_FLAGSĮnvironment variable: Note: In TF2, only the code inside tf.function will be clustered. Subgraphs) within the TensorFlow functions which can be compiled and executed Return ctx.all_reduce(tf., run_fn():Ī simple way to start using XLA in TensorFlow models without any changes is toĮnable auto-clustering, which automatically finds clusters (connected T = tf.ones(shape=, dtype=tf.float32)Ĭtx = tf.distribute.get_replica_context() XLA:GPU can be used with TF distributed strategyīy annotating step function with jit_compile=True: step_fn(): pile: pile(optimizer="adam", jit_compile=True) See the tutorial colab for a more detailedįor Keras models, jit_compile=True can be set as an argument to Note: Nesting behavior: the function will be compiled if at least one function Recompiled_on_launch(tf.ones(), tf.ones()) Shapes can vary across the runs though: recompiled_on_launch(a, b): For example, the followingįunction will not compile: not_compilable(x): Tensors without running the entire computation. 
Inferrable: that is, if it's not possible to infer the dimensions of all XLA can not currently compile functions where dimensions are not The jit_compile API has must-compile semantics: either the entireįunction is compiled with XLA, or an errors.InvalidArgumentError exception is Optimizer.apply_gradients(zip(grads, layer_variables)) Loss = tf.reduce_mean(tf.nn.sparse_softmax_cross_entropy_with_logits( Which performs the MNIST training is compiled with XLA: train_mnist(images, labels): For example, the following TensorFlow function Enable XLA for TensorFlow models Explicit compilation with tf.function(jit_compile=True)Įxplicit compilation API offers a fine-grained control for choosing whichįunctions should be compiled. Removing memory operations is one of the best ways to improve performance. Memory bandwidth is typically the scarcest resource on hardware accelerators, so Fusion is XLA's single most important optimization. These intermediate computations directly to their users while keeping themĮntirely in GPU registers. Produced by y*z and x+y*z to memory instead it "streams" the results of Moreover, this fused operation does not write out the intermediate values "fusing" the addition, multiplication and reduction into a single GPU kernel.

Graph so that it computes the result in a single kernel launch. One for the addition and one for the reduction.

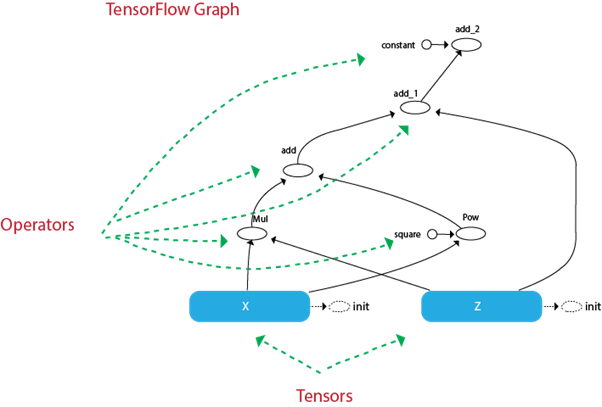

Run without XLA, the graph launches three kernels: one for the multiplication, Optimization XLA does in the context of a simple TensorFlow computation: def model_fn(x, y, z): Model-specific information for optimization. Because these kernels are unique to the model, they can exploit Graph into a sequence of computation kernels generated specifically for the XLA provides an alternative mode of running models: it compiles the TensorFlow Precompiled GPU kernel implementation that the executor dispatches to. tensorflow/cc/ops/math_ops.h) to hand write our own classes if we only have a few custom OPs.When a TensorFlow program is run, all of the operations are executed Note: For TensorFlow inherent OP, code like ZeroOut class is autogenerated by bazel rule. Take ZeroOut from here as example, you have to do the following class ZeroOut ) Īuto v = ZeroOut(root.WithOpName("v"), A)
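To make the fusion idea concrete, here is a minimal pure-Python sketch of the difference between three separate passes and one fused pass over x + y*z. It is only an analogy and not XLA or TensorFlow code: the names unfused and fused are hypothetical, and the reduction is assumed to be a sum, since the text only says "reduction".

```python
# Illustration only: plain Python lists stand in for tensors.
# Assumption: the reduction is a sum; `unfused` and `fused` are made-up names.

def unfused(x, y, z):
    """Three passes, each materializing a full intermediate result."""
    prod = [b * c for b, c in zip(y, z)]        # pass 1: multiplication
    total = [a + p for a, p in zip(x, prod)]    # pass 2: addition
    return sum(total)                           # pass 3: reduction


def fused(x, y, z):
    """One pass; intermediates exist only as per-element temporaries."""
    acc = 0.0
    for a, b, c in zip(x, y, z):
        acc += a + b * c                        # multiply, add, accumulate
    return acc


x = [1.0, 2.0, 3.0]
y = [4.0, 5.0, 6.0]
z = [0.5, 0.5, 0.5]
print(unfused(x, y, z), fused(x, y, z))  # prints "13.5 13.5"
```

Both versions compute the same value; the fused one never stores the lists for y*z and x+y*z, which mirrors how a fused kernel avoids writing intermediates to memory.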
